Hello. This is Alex Bakker with what’s important in the IT and business services industry this week.

If someone forwarded you this briefing, consider subscribing here.

The Validation Bottleneck

One way to think about AI adoption goes like this: people in an enterprise do a pool of work; others examine that work and identify which parts of it AI could do. They then fund an R&D project to make AI do that work and, if the R&D succeeds, they end up with a production AI use case. In that model, we would expect a steadily growing number of use cases in production, alongside some failed experiments where the tools proved insufficient.

But that is not what we see. We see many AI pilots where the R&D phase stretches from months into years, and the outcome remains unclear.

The explanation is that many pilots are stuck not because AI cannot produce an output but because the organization cannot validate that output at the speed and scale production requires. In these cases, the limiting factor is not generation; it is trust.

When people talk about “the pilot,” they often collapse three steps into one. The first is getting the AI to produce outputs that are directionally usable for the task. The second is fitting that output into a real workflow where people will use it, exceptions can be handled and accountability is clear. The third is operating that workflow repeatedly, at volume, with predictable quality and risk. The persistence of so many pilots suggests that many teams clear the first step (a pilot would end quickly if the model produced nothing usable) but stall in the second and third.

This is where data on BPO managed services provides a clean signal. BPO services contain many workflows where AI can help quickly: document intake, case routing, service desk triage, contact center summarization and customer communications. These use cases are high volume, repeatable and measurable. They are also environments where small error rates can turn into downstream costs. At scale, the same workflows that create savings also create large validation workloads.

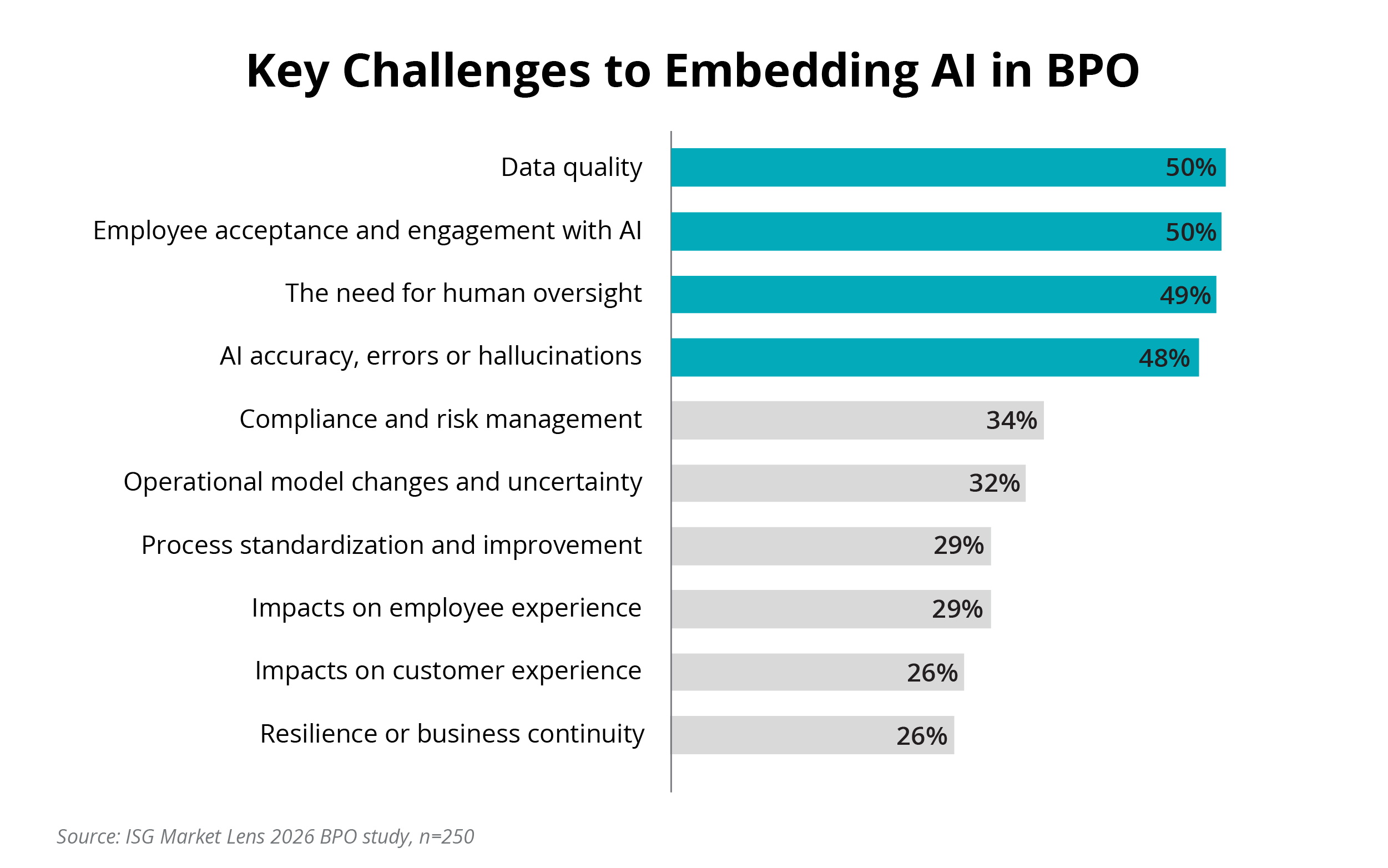

Our ISG Market Lens 2026 BPO Study highlights the challenges organizations face when they try to move from AI pilot to BPO service delivery. The top four challenges are data quality, employee acceptance and engagement, the need for human oversight, and AI accuracy. All four point to the same constraint: validation capacity.

Data Watch

Data quality is an upstream dependency. If data is incomplete, inconsistent across teams or hard to access, the model fills the gaps with inference. This slows review, because validators must reconstruct context rather than simply judge correctness.

Accuracy and hallucinations are a downstream symptom. The issue in production is not whether the model can be wrong; it is whether the workflow can detect and contain the errors that matter, especially plausible errors that look correct at a glance. Those are the errors that force organizations to keep humans in the loop and to keep the scope small or the volume low.

Human oversight is the mechanism for detection and containment, and this is where scale breaks. AI output can increase instantly, but human validation capacity cannot. The people qualified to validate are SMEs, QA analysts and compliance reviewers, who also run delivery, handle escalations and manage SLAs. When validation becomes an added layer on top of that work, the program slows down.

Employee acceptance determines whether the oversight actually works. If frontline teams do not trust the tool, they either bypass it or over-review its output, which erases any productivity gains. Even when the tool is working, change management is hard without proof that the solution is trustworthy.

For providers committed to AI savings, the gating factor is often the client’s ability to validate outcomes at scale.

Still, the biggest gap is in the AI operating model. Most AI operating models treat validation as an implementation step, not a production resource. “Human in the loop” is framed as a safety feature or a temporary control and planned like a fixed-duration project, not a continuous service.

As providers increasingly use AI on behalf of clients, the language will change to match the reality. “Human in the loop” will give way to AI output validation services. These will look like today’s managed services: scope defined by process and risk tier, explicit acceptance criteria, sampling and review rules, exception workflows, audit trails and clear accountability for outcomes. Validation moves from being an implied activity inside a pilot to being an explicit layer of delivery.

This is the practical path from pilots to scale. The constraint is not that AI cannot generate. The constraint is that enterprises and providers have not yet built enough production capacity to validate, govern and stand behind what the model produces.